March 26, 2026

If All of Recruiting Could Be Automated, Where Do We Draw the Line?

The AI question in Talent Acquisition (TA) has shifted. With 91% of TA teams using AI regularly, recruiting leaders aren’t debating whether to adopt AI; the debate is where and how to deploy it for maximum value, and the existential question that’s emerging from that debate is:

What should remain human vs. automated? What is the role of the recruiter in an AI-enabled TA function?

In conversations with Heads of TA across the Recruiting Leadership Council, this is one of the questions surfacing most consistently. The teams most at risk aren’t necessarily the ones moving slowly; they’re the ones automating without intention. The Talent Acquisition functions that endure won’t be the ones that automate the most or the fastest. They’ll be the ones that know exactly where to preserve human uniqueness and where to apply automated efficiency.

The ‘Could’ vs. ‘Should’ Problem

‘Could’ is a technology question, and from the pace of AI advances it seems likely that we’ll have the capability to automate increasingly large swaths of recruiters’ workflows. ‘Should,’ however, is a strategic workforce planning question and the more important one for leaders.

Recruiting, at its best, is about building relationships. When automation becomes integrated into that process, it doesn’t just change how work gets done. It also has the potential to change the work itself and who is accountable for its outcomes. And accountability isn’t just about explainability; it’s about ownership. Someone needs to be able to stand behind a hiring decision, defend it, and be responsible if it’s wrong.

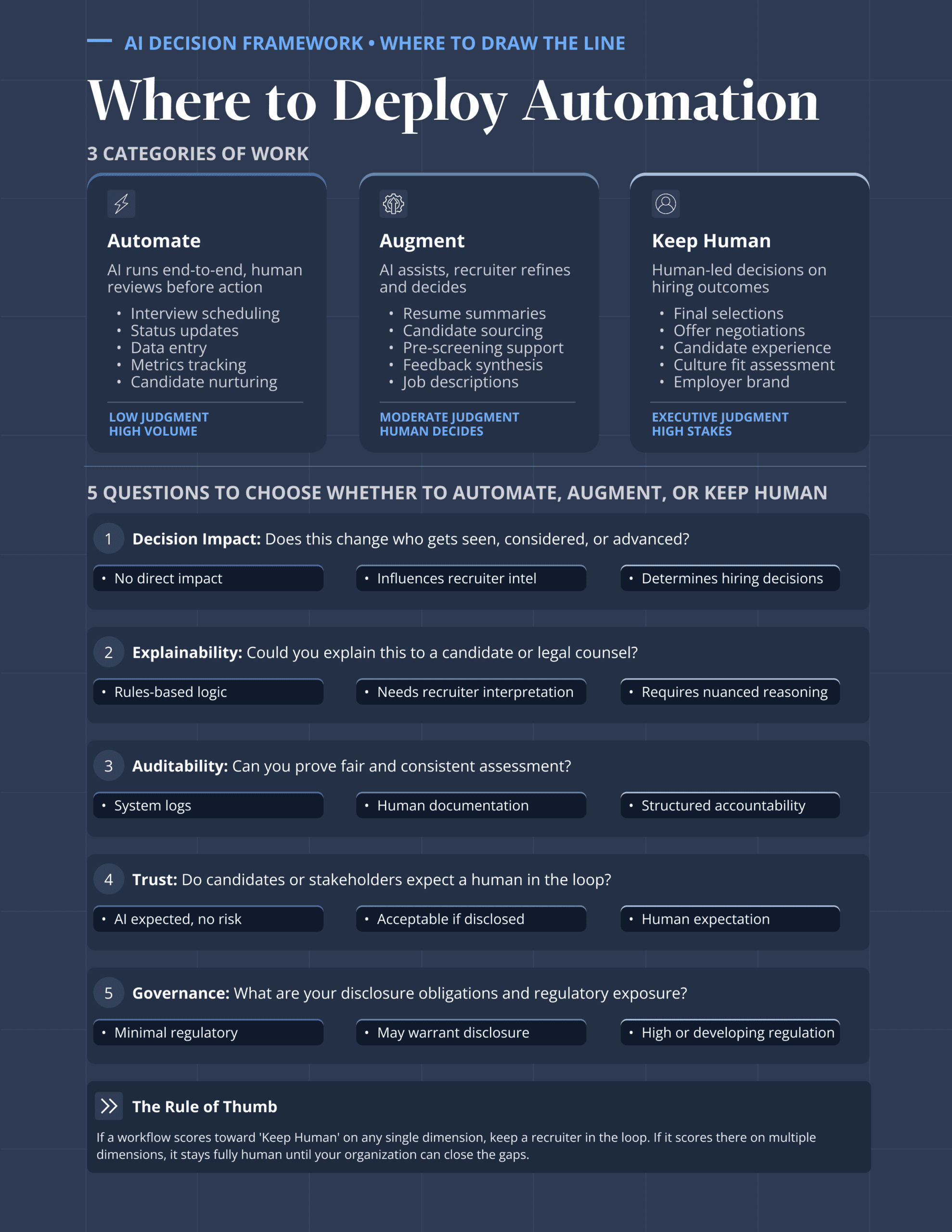

That’s the crux. Every generation of technology reshapes a profession: what it’s for, what it’s worth, what only it can do. AI is doing that to recruiting now. The question isn’t whether to engage with it — it’s how to engage with it wisely. That starts with a framework for deciding what to Automate, what to Augment, and what to keep irreducibly Human.

Automation: Where the Returns Are Too High to Ignore

A significant portion of recruiting work is genuinely well-suited for automation, and many teams are already piloting new use cases to get it there.

Interview scheduling. Job description generation. Hiring manager status updates. Candidate communication triggers. Data entry and system updates. These workflows share a common profile: simple judgment, high volume, low error cost. AI running these end-to-end doesn’t compromise the process. Rather, it clears the path for recruiters to focus on work that actually requires human judgment.

Automation won’t eliminate the recruiter role. It will simply raise the bar for it. As sourcing, scheduling, and coordination become automated, recruiter value shifts toward interpretation, advisory judgment, and influencing hiring decisions.

The signal: when the work is repetitive, the stakes are low, and the output is measurable, you’re not protecting anything by keeping a human in the loop. That work likely belongs in automation.

Augmentation: Where AI Accelerates Human Decision-Making

In an ideal state, augmentation means AI does the heavy lifting, but it is the recruiter who interprets, validates, and acts on the output. A growing share of TA teams are entering this territory, thoughtfully.

Resume summarization. Candidate research and sourcing. Pre-screening against requirements. Interview feedback synthesis. Market and compensation benchmarking. In each case, AI accelerates what historically took hours, and then the human decides next steps.

This is also where conversations with Heads of TA surfaced nuanced findings. The leaders navigating this well are explicit about the boundary internally: ‘AI segments, recruiters select.’ Pre-screening outputs are treated as inputs to a decision, not the decision itself. The ones running into friction are the ones who let that distinction blur.

The signal: if a recruiter needs to interpret the output before acting on it, you’re in augment territory.

Keep Human: Where Risk Isn’t Offset by Automation Gains

Matching. Prioritization. Selection-adjacent decisions. These are the workflows where a wrong call hurts efficiency and trust, and where the accountability gap is most likely to open.

For most TA leaders, matching is consistently treated as higher-risk territory. Teams see a clear path on pre-screening and summarization, but matching is where legal scrutiny intensifies. Several leaders have formally restricted AI use in selection processes, not because the technology lacks capability, but because the visibility and oversight around its decision-making aren’t yet sufficient to warrant that trust.

The rollback cost is real. When a candidate gets auto-declined by an algorithm, or even by what simply feels like a bot making a decision, the employer brand can take a hit. Final candidate selection, offer decisions, sensitive conversations, diversity and equity judgment calls: these belong to a human. Not because AI can’t process the variables, but because someone needs to be able to stand behind the outcome.

The signal: when stakes are high, situations are nuanced, or a wrong call has consequences you can’t walk back, keeping a human in the loop isn’t a limitation of the technology; it’s smart practice.

A Framework for Drawing the Line

The three categories above (Automate, Augment, Keep Human) provide the architecture. The five questions below give the sorting mechanism. Run any workflow through them, and where it lands tells you where it belongs.

- Does this decision change who gets seen or selected?

- Can the output be explained and defended if challenged?

- Is there a human who can be held accountable for the outcome?

- What’s the cost of a wrong call to the candidate, to the brand, and to the organization?

- Does your legal and governance structure support this level of automation today?

Intentionality Is the Strategy

The right answer will look different for every organization: different scale, different risk tolerance, different regulatory exposure, different candidate populations. There is no universal line.

What matters is drawing it with intention, before you need to defend it. Before legal asks. Before a governance committee reverses a live implementation. Before a candidate asks why their application disappeared.

Automation should free recruiters to do the work only humans can do, and do best. But TA teams must remain accountable for every decision that determines whether someone gets a fair shot.

Continue the conversation.

If you’re calibrating where automation fits in your workflow, the fastest way to de-risk the decision is to validate it against firsthand perspective from peers who are navigating the same terrain. The Recruiting Leadership Council provides Heads of Talent Acquisition with access to peer-to-peer talent intelligence on demand, ensuring no leader has to start from scratch.